DexUMI: Visuo-Tactile Manipulation

Imitation learning for home assistance tasks

In Progress

Northwestern University

Overview

This project develops a visuo-tactile imitation learning system for the Hello Robot Stretch 3, enabling users to teach manipulation tasks through human demonstration. The core insight is that designing the UMI (Universal Manipulation Interface) to geometrically match the robot’s own gripper reduces the cross-embodiment challenge. The system captures synchronized vision, proprioception, and tactile feedback, then packages the data as a Hugging Face dataset used to train ACT and Diffusion policies with the LeRobot framework.

Cross-Embodiment

A central challenge in robot learning from demonstration is the embodiment gap: human hands and robot grippers have different kinematics, so translating demonstrations requires retargeting that introduces error. DexUMI addresses this by detaching the robot’s existing DexWrist3 gripper and using it to collect demonstrations.

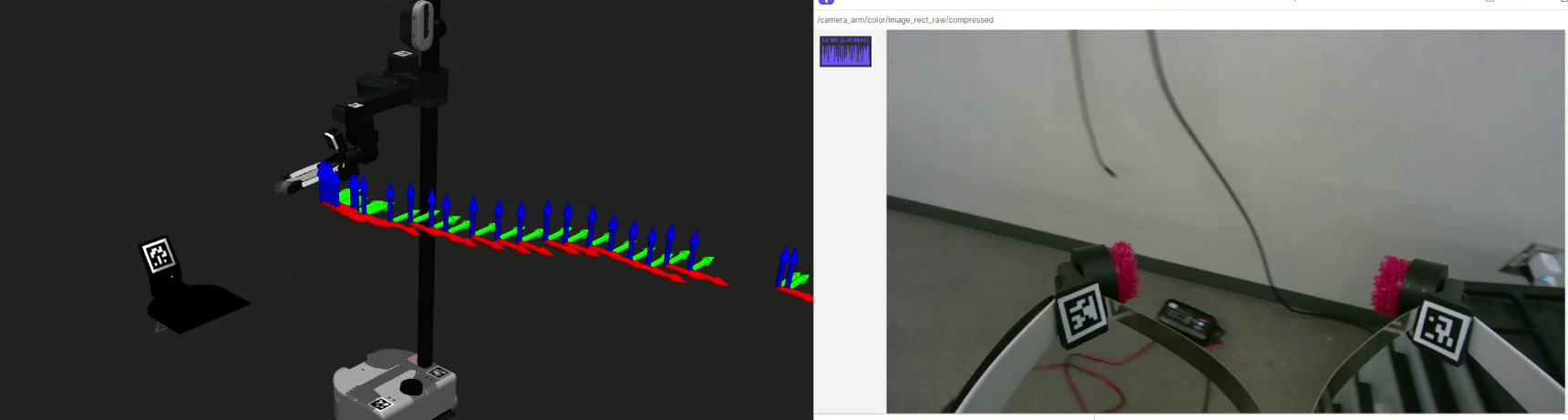

DexUMI is a custom handheld teleoperator built around the same parallel gripper used on the Stretch 3. Because the operator and robot share identical gripper geometry, opening and closing the gripper in the human’s hand maps one-to-one to the robot. The geometric arrangement of the D405 camera and eFlesh tactile sensors is also identical, since the gripper itself is attached to the UMI during demonstration. As a result, the visuo-tactile signals recorded during demonstration correspond directly to what the robot will sense during deployment.

Tactile Sensing: eFlesh Integration

Many manipulation tasks fail when vision alone is insufficient — contact forces during insertion or grasping are invisible to any camera. This project integrates eFlesh, an existing soft tactile sensor system, to address this. Each fingertip embeds 5 three-axis magnetometers, providing a rich tactile signal that captures contact location and force direction.

Each sensor array is read by a QT Py microcontroller over USB serial. A custom ROS 2 node aggregates and time-stamps the tactile stream alongside joint encoder and camera data, publishing all modalities for recording and policy inference.
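As a rough illustration of the tactile path described above, the sketch below turns one reading into the 15-dimensional per-finger vector (5 magnetometers × 3 axes). The comma-separated line format and the names `parse_eflesh_line` and `TactileSample` are assumptions made for this example; the actual QT Py firmware output and the ROS 2 message types are not specified here.

```python
import time
from dataclasses import dataclass
from typing import List, Optional

NUM_MAGNETOMETERS = 5  # per fingertip, as stated above
AXES = 3               # x, y, z per magnetometer


@dataclass
class TactileSample:
    stamp: float               # host-side timestamp in seconds
    values: List[List[float]]  # 5 rows, one (x, y, z) triple per magnetometer

    def flat(self) -> List[float]:
        """Flatten to the 15-dimensional vector used as a policy input."""
        return [v for triple in self.values for v in triple]


def parse_eflesh_line(line: str, stamp: Optional[float] = None) -> TactileSample:
    """Parse one hypothetical serial line: 15 comma-separated floats."""
    fields = [f for f in line.strip().split(",") if f]
    expected = NUM_MAGNETOMETERS * AXES
    if len(fields) != expected:
        raise ValueError(f"expected {expected} values, got {len(fields)}")
    nums = [float(f) for f in fields]
    # Group consecutive values into per-magnetometer (x, y, z) triples.
    values = [nums[i:i + AXES] for i in range(0, expected, AXES)]
    # Stamp on receipt unless the caller supplies a time.
    return TactileSample(stamp=time.time() if stamp is None else stamp, values=values)
```

Stamping each sample as it is received gives the aggregating node a common time base for pairing tactile readings with joint and camera data.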

Retargeting

Human arm motion during demonstrations is captured via the DexUMI device and retargeted to the Stretch 3’s joint space in real time during inference. The retargeting uses Jacobian-based damped least-squares IK to map end-effector pose commands into lift, arm, and wrist joint velocities. This project uses episode-relative joint actions (Δq) rather than absolute positions to improve generalization across demonstrations that start from slightly different configurations.
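The damped least-squares step can be sketched on a toy model. The example below is not the project's kinematics: it assumes a simplified planar chain with a prismatic lift, a prismatic arm extension, and a single wrist pitch joint with a hypothetical 0.2 m link. It computes dq = Jᵀ(JJᵀ + λ²I)⁻¹v for a 2D end-effector velocity v. The `episode_relative` helper shows one reading of "episode-relative" actions, namely joint positions expressed relative to the first frame of the episode; that interpretation is also an assumption.

```python
import math
from typing import List, Sequence

Matrix = List[List[float]]


def toy_jacobian(q: Sequence[float], link: float = 0.2) -> Matrix:
    """Jacobian of (x, z) w.r.t. (lift, arm, pitch) for the toy chain
    x = arm + link*cos(pitch), z = lift + link*sin(pitch)."""
    pitch = q[2]
    return [
        [0.0, 1.0, -link * math.sin(pitch)],  # dx/d(lift, arm, pitch)
        [1.0, 0.0, link * math.cos(pitch)],   # dz/d(lift, arm, pitch)
    ]


def dls_velocities(J: Matrix, v: Sequence[float], damping: float = 0.05) -> List[float]:
    """Damped least-squares joint velocities for a 2-row Jacobian."""
    # A = J J^T + damping^2 * I is a symmetric 2x2 matrix [[a, b], [b, d]].
    a = sum(x * x for x in J[0]) + damping ** 2
    b = sum(x * y for x, y in zip(J[0], J[1]))
    d = sum(y * y for y in J[1]) + damping ** 2
    det = a * d - b * b  # positive whenever damping > 0
    # y = A^{-1} v via the closed-form 2x2 inverse.
    y0 = (d * v[0] - b * v[1]) / det
    y1 = (-b * v[0] + a * v[1]) / det
    # dq = J^T y
    return [J[0][j] * y0 + J[1][j] * y1 for j in range(len(J[0]))]


def episode_relative(joint_traj: Sequence[Sequence[float]]) -> List[List[float]]:
    """Express every joint vector relative to the first frame of the episode."""
    q0 = joint_traj[0]
    return [[q - q_start for q, q_start in zip(step, q0)] for step in joint_traj]
```

The damping term keeps the solve well conditioned near singular configurations, at the cost of slightly undershooting the commanded velocity.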

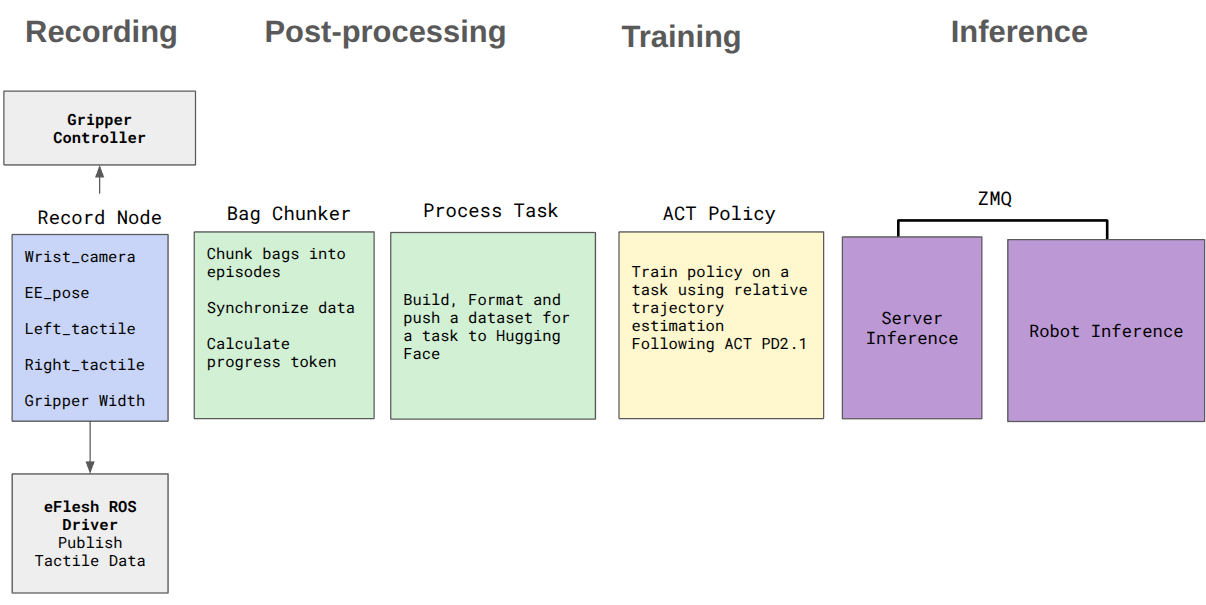

Recording Pipeline

Demonstrations are recorded as synchronized streams of three modalities:

- Vision: 640×480 RGB from the gripper-mounted camera, resized to 320×320 and transmitted over ZMQ

- Proprioception: joint states for lift, arm, wrist yaw/pitch/roll, and gripper (7 DoF)

- Tactile: 15-dimensional eFlesh signal per finger at full sensor rate

Raw rosbags are processed through a chunking and conversion pipeline into HuggingFace-compatible datasets at 10 fps, then uploaded for training. ArUco markers on the gripper provide end-effector pose ground truth for each frame.
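One way to bring streams recorded at different rates onto a common 10 fps timeline is nearest-timestamp matching over the window where all streams overlap. The sketch below illustrates that idea only; the function names and the in-memory `(timestamps, samples)` layout are assumptions, and the project's actual chunking and conversion code may work differently.

```python
import math
from bisect import bisect_left
from typing import Dict, List, Sequence, Tuple


def nearest_index(stamps: Sequence[float], t: float) -> int:
    """Index of the timestamp closest to t (stamps must be sorted ascending)."""
    i = bisect_left(stamps, t)
    if i == 0:
        return 0
    if i == len(stamps):
        return len(stamps) - 1
    # Choose between the neighbor at or after t and the one before it.
    return i if stamps[i] - t < t - stamps[i - 1] else i - 1


def align_streams(
    streams: Dict[str, Tuple[Sequence[float], Sequence[object]]],
    fps: float = 10.0,
) -> List[Dict[str, object]]:
    """Sample every stream on a fixed-rate grid over their common time window."""
    t0 = max(stamps[0] for stamps, _ in streams.values())
    t1 = min(stamps[-1] for stamps, _ in streams.values())
    if t1 < t0:
        return []  # the streams never overlap in time
    n = int(math.floor((t1 - t0) * fps + 1e-9)) + 1
    frames = []
    for k in range(n):
        t = t0 + k / fps
        frame: Dict[str, object] = {"t": t}
        for name, (stamps, samples) in streams.items():
            frame[name] = samples[nearest_index(stamps, t)]
        frames.append(frame)
    return frames
```

Each returned frame holds one sample per modality, which is the per-row layout a frame-indexed dataset expects.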

Demonstrations

Policy Learning

Recorded demonstrations are converted into a LeRobot-compatible dataset and uploaded to HuggingFace for training with ACT.

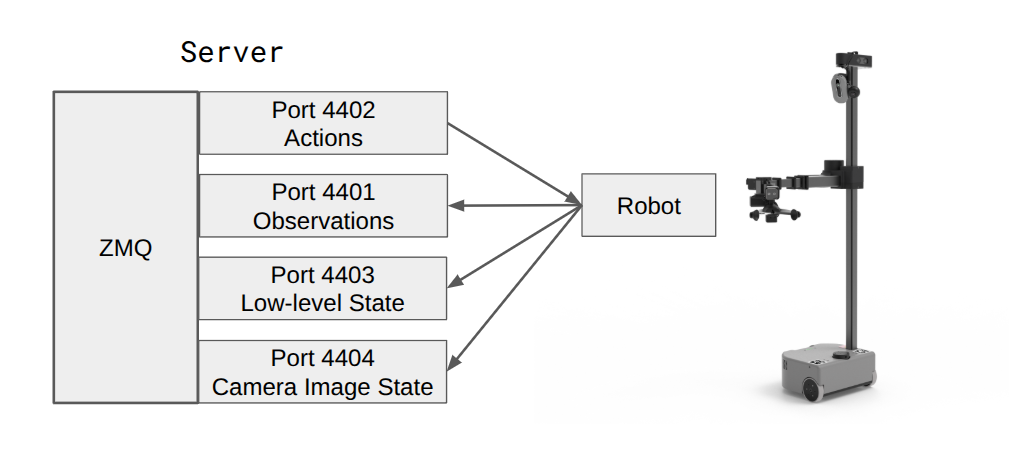

Rollout architecture: the policy runs on a remote GPU server and streams joint commands to the robot over ZMQ.
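A minimal robot-side loop for this arrangement might look like the sketch below. It assumes a request/reply socket pair and JSON messages with `joints`, `tactile`, and `action` fields, and it omits camera frames for brevity. The text above specifies only that commands travel over ZMQ, so the message schema, the function names, and the choice of REQ/REP are all illustrative.

```python
import json
from typing import Callable, List, Sequence, Tuple


def encode_observation(joints: Sequence[float], tactile: Sequence[float]) -> bytes:
    """Serialize one observation (hypothetical schema) for the policy server."""
    return json.dumps({"joints": list(joints), "tactile": list(tactile)}).encode("utf-8")


def decode_action(payload: bytes) -> List[float]:
    """Extract the joint command from the server's reply (hypothetical schema)."""
    msg = json.loads(payload.decode("utf-8"))
    return [float(x) for x in msg["action"]]


def rollout(
    endpoint: str,
    read_observation: Callable[[], Tuple[Sequence[float], Sequence[float]]],
    send_joint_command: Callable[[List[float]], None],
    steps: int,
) -> None:
    """Send observations to the remote policy and apply the returned actions."""
    import zmq  # pyzmq, imported lazily so the helpers above work without it

    ctx = zmq.Context()
    sock = ctx.socket(zmq.REQ)
    sock.connect(endpoint)  # e.g. "tcp://<gpu-server>:<port>"
    try:
        for _ in range(steps):
            joints, tactile = read_observation()
            sock.send(encode_observation(joints, tactile))
            send_joint_command(decode_action(sock.recv()))
    finally:
        sock.close(linger=0)
        ctx.term()
```

A strict request/reply loop keeps the robot and policy in lockstep, so each command is computed from the most recent observation the robot sent.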

Next Steps

This project has successfully built a pipeline that takes human demonstrations and turns them into a deployable policy that executes on the robot. The final stages of the project, now in progress, focus on refining that pipeline. To address the noisy wrist rotation data currently reflected in the policy's behavior, an IMU is being integrated into the DexUMI.

The video shows a representative rollout: the robot attempts to pick up the cup but executes an out-of-distribution wrist rotation, knocking it over instead.

ACT rollout2

BibTeX

@article{pattabiraman2025eflesh,
  title={eFlesh: Highly customizable Magnetic Touch Sensing using Cut-Cell Microstructures},
  author={Pattabiraman, Venkatesh and Huang, Zizhou and Panozzo, Daniele and Zorin, Denis and Pinto, Lerrel and Bhirangi, Raunaq},
  year={2025},
  archivePrefix={arXiv},
  eprint={2506.09994}
}

@inproceedings{chi2024universal,
  title={Universal Manipulation Interface},
  author={Chi, Cheng and Xu, Zhenjia and others},
  booktitle={Robotics: Science and Systems},
  year={2024}
}

@article{zhao2023learning,
  title={Learning Fine-Grained Bimanual Manipulation with Low-Cost Hardware},
  author={Zhao, Tony Z and Kumar, Vikash and Levine, Sergey and Finn, Chelsea},
  journal={arXiv:2304.13705},
  year={2023}
}

@article{chi2023diffusion,
  title={Diffusion Policy: Visuomotor Policy Learning via Action Diffusion},
  author={Chi, Cheng and Feng, Siyuan and others},
  journal={arXiv:2303.04137},
  year={2023}
}